Election integrity

TikTok is a home for entertainment, connection, and expression. While that often means light-hearted creative expression, it can also include topics that impact the lives of our community, including political or election-related content. We welcome all expression that fits within our Community Guidelines, which are designed to help us keep TikTok a safe and welcoming place for everyone.

TikTok isn’t a go-to hub for breaking news, and we don’t accept paid political ads, but we are committed to combatting the spread of misinformation on the platform, including through supporting our community with education and authoritative information on important public topics like elections. Our goal is to help TikTok remain a place where authentic content thrives.

Collaborating with experts

We believe collaboration helps strengthen our efforts to protect against harm and misuse on our platform, which is why we work with an array of experts and organizations to help us promote safety on TikTok. We collaborate with organizations across all the markets in which we operate. For instance, during the 2020 US presidential election, we worked with organizations such as the National Association of Secretaries of State, the US Department of Homeland Security, the Election Integrity Partnership, and our Content Advisory Council, an external group of leading experts who provide invaluable feedback on our policies and practices around election misinformation, hate speech, and more.

Through our global partnerships with fact-checking organizations, including Agence France-Presse (AFP), Animal Político, dpa Deutsche Presse-Agentur, Estadão Verifica, Lead Stories, Newtral, Facta, PolitiFact, Science Feedback, Teyit, and Vishvas News, we work to limit the potential for the spread of misinformation on our platform. These organizations work in tandem with our internal investigation and moderation team to help verify election-related misinformation.

Product features

Hashtag PSA: Around the world, we provide notices on many election-related hashtag pages to remind people to follow our Community Guidelines, verify facts, and report content they believe may violate our policies.

Reporting: We’ve made it easy for people to report misleading content directly through our app, and these reports are sent to a dedicated US team that reviews them in accordance with our policies around misinformation.

Media literacy: To help our community think critically about the content they create and the content they consume, we’ve developed educational TikTok videos that provide community members with the tools they need to become more savvy digital citizens.

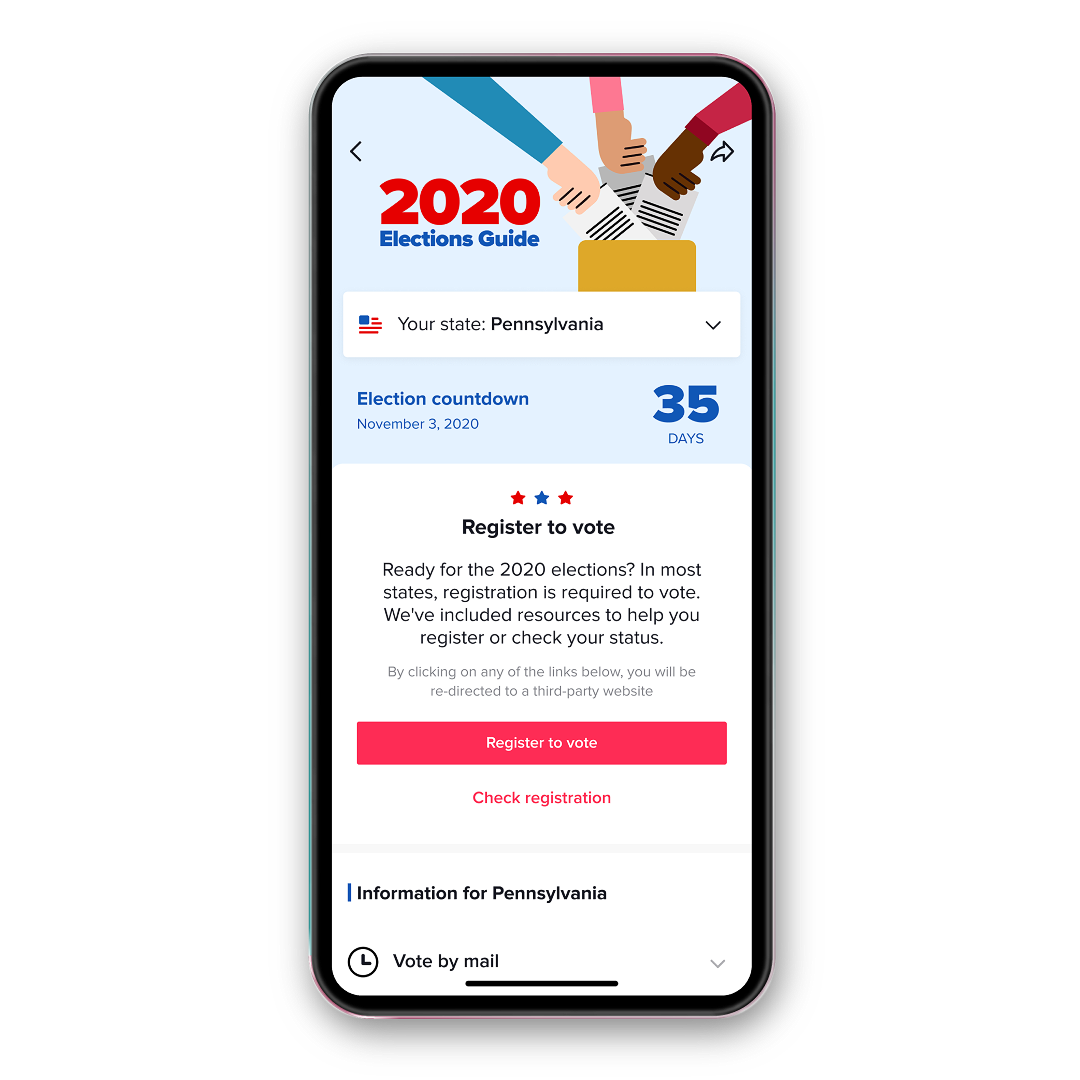

In-app guide: For the 2020 US presidential election, we launched an in-app 2020 Elections Guide connecting millions of interested community members with authoritative information about the elections from the National Association of Secretaries of State, BallotReady, SignVote, The AP, and more.

Our policies

To help us deliver on our mission to inspire creativity and bring joy, we publish Community Guidelines to clearly outline the kind of content and behavior that is not allowed on TikTok. These policies apply to everyone who uses TikTok and all the content they post. A subset of these policies applies most often to election-related content.

Enforcing our policies

We take multiple approaches to protect our community and limit the spread of harmful content and behavior as we work to promote an uplifting and welcoming app environment.

Our approach: Remove content for violation of our Community Guidelines

Rationale: When we become aware of content that violates our Community Guidelines, including disinformation or misinformation that causes harm to individuals, our community or the larger public, we remove it in order to foster a safe and authentic app environment.

Related to the elections: Some examples of what we would remove include: false claims that seek to erode trust in public institutions, such as claims of voter fraud resulting from voting by mail or claims that your vote won’t count; content that misrepresents the date of an election; attempts to intimidate voters or suppress voting; and more.

Our approach: Redirect search results and hashtags to our Community Guidelines

Rationale: Content and terms associated with content that violate our Community Guidelines may be limited across our platform to promote safety within the app.

Related to the elections: This may include redirecting terms associated with hate speech, incitement to violence, or disinformation around voter fraud, such as ballot harvesting.

Our approach: Reduce content discoverability, including by redirecting search results or making such content ineligible for recommendation into anyone’s For You feed

Rationale: Our recommendation system is designed with safety in mind. Some content – including spam, videos under review, or reviewed content depicting things that may be shocking to a general audience that hasn’t opted in to such content – may not be eligible for recommendation.

Related to the elections: This includes reviewed content that shares unverified claims, such as a premature declaration of victory before results are confirmed; speculation about a candidate’s health; claims relating to polling stations on election day that have not yet been verified; and more.

Our approach: No restrictions to this sort of content on the platform

Rationale: Some content – deemed as educational, scientific, artistic, or newsworthy – can carry value to the public interest. We define public interest as something in which the public as a whole has a stake, and we believe the welfare of the public warrants recognition and protection. However, we do not allow newsworthy content that incites people to violence.

Related to the elections: We may choose not to take action on otherwise violating content that is deemed newsworthy. For instance, while we don’t allow violent content, we do not take action against content that depicts violence at a protest.

Our approach: Block the account from future livestreaming

Rationale: TikTok LIVE allows people further avenues for authentic connection with their audience, but community members must be over the age of 16 to livestream and are expected to maintain trust with their community. Community members who violate our Community Guidelines may lose access to this function.

Related to the elections: A violation may include a livestream that seeks to incite violence or promote hateful ideologies, conspiracies, or disinformation.

Our approach: Remove the account and its content

Rationale: Users who violate our zero tolerance policies or who have repeated violations may face account bans, as they demonstrate a persistent misunderstanding of the platform’s code of conduct.

Related to the elections: For example, accounts that are found to be dedicated to the spread of election-related disinformation will be banned.

Our approach: Ban the device, including all linked accounts, and block the ability to create future accounts from that device

Rationale: We employ device bans for the most serious offenders who have violated our policies and demonstrated a refusal to adhere to our Community Guidelines and Terms of Service.

Related to the elections: For example, we may ban devices with multiple accounts that violate our zero tolerance policies, such as a network engaged in coordinated inauthentic behavior or election interference.

Case Study: 2020 US Elections

In autumn 2020, we launched a 2020 US elections page in TikTok’s Safety Center, where we provided information about our election integrity efforts as well as daily updates on the steps we took to minimize opportunities for interference and harm on the platform. This was TikTok’s first US presidential election, and we sought to offer transparency and accountability by bringing visibility into how we worked to counter misinformation, hate speech, and more. We recently published lessons we learned during the US elections process, and those learnings will shape how we protect our platform during future elections.

From our Newsroom

Supporting our community on Election Day and beyond

Increasing transparency into our elections integrity efforts

TikTok launches in-app guide to the US 2020 elections

Combating misinformation and election interference on TikTok

TikTok’s “Be Informed” series stars TikTok creators to educate users about media literacy

How TikTok recommends videos #ForYou

Building to support content, account, and platform integrity